Say there are between 4 and 40 AI agents acting on behalf of every human in an enterprise. The ‘human-in-the-loop’ safety math simply doesn't work at that scale. With millions of agents, manual human oversight becomes physically impossible, and without an autonomous oversight mechanism these agents operate as unmanageable entities in a legal and operational vacuum. Humans will either rubber-stamp at speed or revolt against the tedium. The need for viable technologies is now driving a billion dollars of infrastructure investment in Proof of Identity and Proof of Intent platforms.

Mastercard, Visa, Google AP2, OpenAI, Stripe and Releaf Financial Inc are all potential providers of this trust layer to validate identity and intent. If digital agent chaos is to be avoided, I can see partnerships forming to deliver this vital trust layer, with standards that are practical for enterprises, institutions and the public sector. The article below analyses the likely outcomes over the next few years and the infrastructure framework these providers operate within.

What can we expect in mature Western markets, in Africa with its fast-growing Mobile Money innovation, in dynamic Latin America and in innovative Asia-Pacific? Will one dominant infrastructure emerge, or will diverging infrastructures complicate matters? Let's review the main infrastructure levels.

- The payment network: the current gateway giants

- Identity: who is behind the agents?

- Proof of Intent: the heart of the matter

- Oversight and behavioural trust

- Blockchain audit: immutability as a guarantee

- Potential fault lines

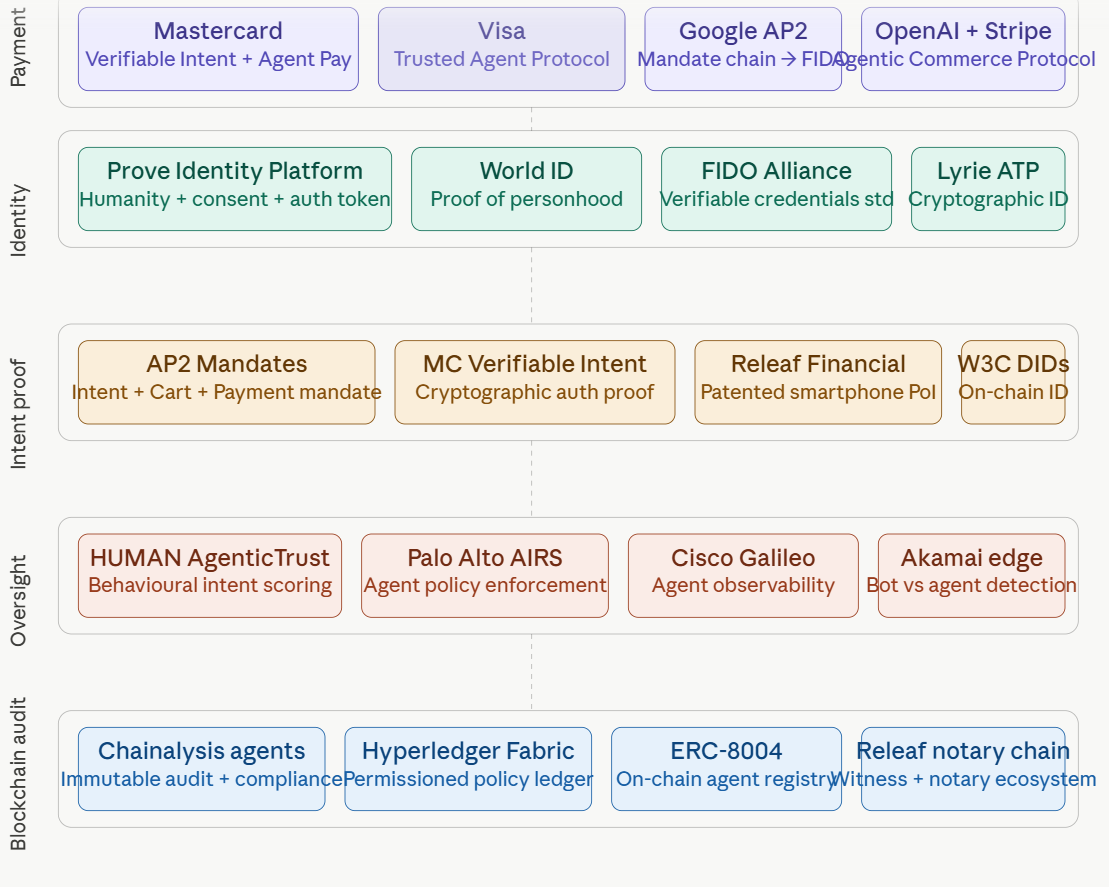

The overlapping infrastructure layers

The diagram is illustrative, not comprehensive, and the scene is changing fast!

Layer 1: Payment network: the gateway giants

Mastercard, Visa and Google AP2 are the gorillas in the market.

Mastercard's Verifiable Intent standard introduces a new trust layer for agentic AI, providing cryptographic proof of authorisation for consumers, merchants, and issuers. In the coming months it will be integrated directly into Mastercard Agent Pay's intent APIs. Verifiable Intent is AP2-compatible and was co-developed with Google. It creates a tamper-proof log of user-authorised agent actions to ensure accountability, and it is being donated to the FIDO Alliance, which is the right governance move to avoid proprietary lock-in.

Visa launched its Trusted Agent Protocol in October 2025. It establishes a foundational framework for agentic commerce, developed in collaboration with Cloudflare, enabling secure communication between AI agents and merchants at every step of a transaction. It was designed to help merchants distinguish between malicious bots and legitimate AI agents acting on behalf of consumers. The protocol carries three types of cryptographic information: agent intent, consumer recognition, and payment data in the merchant's preferred format. Akamai subsequently joined, adding edge-based behavioural intelligence.

Google AP2, perhaps the most technically complete open standard, builds trust using Mandates: tamper-proof, cryptographically signed digital contracts that serve as verifiable proof of a user's instructions, covering both human-present and human-not-present scenarios. Google has since donated AP2 to the FIDO Alliance and released v0.2, which introduces "human not present" payments, allowing agents to securely execute payments autonomously based on pre-authorised user instructions. The protocol was built with over 60 partners including Mastercard, PayPal, Adobe, Salesforce, and Coinbase.
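
To make the idea of a signed mandate concrete, here is a minimal Python sketch. It is not the AP2 mandate format: the field names (`agent_id`, `merchants`, `max_amount_cents`, `human_present`) and the helper functions are hypothetical, and an HMAC with a shared demo key stands in for the asymmetric signature a real mandate would presumably carry. It only illustrates the two checks the text describes: the instructions have not been altered, and the agent's action falls within what the user pre-authorised.

```python
import hashlib
import hmac
import json

# Hypothetical demo key. A real mandate would be signed with the user's
# private key (asymmetric signature), not a shared HMAC secret.
USER_KEY = b"demo-user-key"


def canonical(mandate: dict) -> bytes:
    """Serialise a mandate deterministically so the signature is stable."""
    return json.dumps(mandate, sort_keys=True, separators=(",", ":")).encode()


def sign_mandate(mandate: dict, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag over the canonical mandate."""
    return hmac.new(key, canonical(mandate), hashlib.sha256).hexdigest()


def verify_mandate(mandate: dict, tag: str, key: bytes) -> bool:
    """Compare tags in constant time; any edit to the mandate fails."""
    return hmac.compare_digest(sign_mandate(mandate, key), tag)


def within_mandate(mandate: dict, merchant: str, amount_cents: int) -> bool:
    """Check a proposed payment against the pre-authorised limits."""
    return (
        merchant in mandate["merchants"]
        and amount_cents <= mandate["max_amount_cents"]
    )


# Illustrative mandate for a human-not-present purchase (all values made up).
mandate = {
    "agent_id": "agent-123",
    "merchants": ["example-store"],
    "max_amount_cents": 5000,
    "human_present": False,
}
tag = sign_mandate(mandate, USER_KEY)
```

A merchant or issuer would call `verify_mandate` before `within_mandate`: a mandate whose spending limit has been edited after signing fails verification, so the scope check only ever runs on instructions the user actually signed.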

Layer 2: Who is behind the agents?

Prove Identity Platform, launched in April 2026, is an identity-layer entrant. It transforms identity from a one-time verification event into a persistent validation of trust, where people, businesses, and AI agents must all be verified and trusted in real time. Its agentic solution suite embeds cryptographically signed consent directly into the identity token so it travels with every agent action — a single token call is claimed to deliver proof of humanity, proof of consent, and proof of authorisation simultaneously.

Lyrie.ai exited stealth mode in May 2026 with its Agent Trust Protocol (ATP), an open cryptographic standard for AI agent identity verification, and has gained acceptance into Anthropic's inaugural Cyber Verification Program. The identity problem it addresses is concrete: when an autonomous agent reads email, executes code, moves money, or signs a contract on behalf of a human operator, the system receiving that action has no reliable way to verify who authorised it, what scope that agent was granted, or whether its instructions have been tampered with in transit. Lyrie.ai is pre-seed and nascent.

Billions Network already validates human identity and offers a ‘Know Your Agent’ module to validate agent identity, ownership and accountability. It counts HSBC, the Indian Government and TikTok amongst its clients and has validated over 2 million humans.

Layer 3: Proof of Intent: the heart of the matter

Industry has converged on the insight that trust in payments must be anchored to deterministic, non-repudiable proof of intent from the user. This directly addresses the risk of agent error or hallucination and provides a cryptographic audit trail for every transaction.

Releaf Financial occupies a distinctive niche here. Its patented approach uses latent compute on smartphones, combined with an ecosystem of witnesses and notaries writing proof of intent to blockchain, and is closest to what you might call a "legal-grade" intent record. While the major networks focus on payment authorisation, Releaf's architecture seems oriented toward a broader class of agentic action, more analogous to a notarial system than a payment network. That is a genuinely different and complementary positioning.

Layer 4: Oversight and behavioural trust

HUMAN Security's AgenticTrust gives security and fraud teams continuous visibility into AI agents interacting with their websites and applications. It identifies agents, classifies their behaviour, and enforces rules that govern what agents are allowed to do, distinguishing the well-intentioned from the out-of-bounds. AgenticTrust decisions evolve in real time based on what the agent does, not just what it is.

Palo Alto Networks' Prisma AIRS 3.0, announced at RSA Conference 2026, and Cisco's Galileo acquisition — an AI agent observability platform announced in April 2026 — are both coming from established platform positions with existing enterprise relationships. These are the "governance layer" players: less about proving original intent, more about monitoring compliance with it continuously.

Releaf Financial's ecosystem of witnesses and notaries validates that humans have authorised agents to make deterministic decisions within tightly defined and controlled rules. The more complex the decision, the higher the level of validation that must be applied.

Layer 5: Blockchain audit: immutability as a guarantee

Chainalysis has introduced blockchain intelligence agents designed around four key principles: data quality, context and reasoning, auditable results with deterministic workflows, and humans in control. For critical decisions, the same inputs, rules, and data should produce the same outcome, so automation stays predictable, consistent, and defensible.
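
The "same inputs, same rules, same outcome" principle can be sketched in a few lines of Python. This is not Chainalysis code: the rule set, thresholds, and field names (`risk_score`, `country`, `max_risk_score`) are hypothetical. The sketch shows a decision written as a pure function of its inputs and a versioned rule set, plus a digest a reviewer could use to replay the decision later and confirm nothing changed.

```python
import hashlib
import json

# Hypothetical rule set; the thresholds are illustrative only.
RULES = {"version": "r1", "max_risk_score": 70, "blocked_countries": ["XX"]}


def decide(inputs: dict, rules: dict) -> dict:
    """Pure function: the outcome depends only on inputs and rules."""
    reasons = []
    if inputs["risk_score"] > rules["max_risk_score"]:
        reasons.append("risk_score_above_threshold")
    if inputs["country"] in rules["blocked_countries"]:
        reasons.append("blocked_country")
    # Anything that trips a rule goes to a person, keeping humans in control.
    outcome = "escalate_to_human" if reasons else "approve"
    return {
        "outcome": outcome,
        "reasons": reasons,
        "rules_version": rules["version"],
    }


def audit_digest(inputs: dict, rules: dict, decision: dict) -> str:
    """SHA-256 over canonical JSON so a reviewer can replay and compare."""
    payload = json.dumps(
        {"inputs": inputs, "rules": rules, "decision": decision},
        sort_keys=True,
        separators=(",", ":"),
    )
    return hashlib.sha256(payload.encode()).hexdigest()


inputs = {"risk_score": 82, "country": "GB"}
first = decide(inputs, RULES)
second = decide(inputs, RULES)  # replaying gives the identical result
```

Because `decide` reads no clock, random source, or model output, replaying the stored inputs against the stored rule version reproduces the decision, which is what makes the result defensible in an audit.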

Emerging on-chain proposals such as ERC-8004 extend the authorisation framework to agents themselves by defining on-chain registries for agent identity, validation, and reputation, supporting decisions about which agents are permitted to act, and strengthening trust in machine-initiated actions.

Releaf Financial writes all validated proofs of intent to blockchain to provide an immutable record for future compliance processes.
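
Why does writing records to a chain make them hard to alter quietly? The Python sketch below illustrates the underlying idea with a simple in-memory hash chain. It is not a blockchain and not Releaf Financial's implementation; the record fields (`agent_id`, `intent`, `witnesses`) and function names are hypothetical. Each entry's hash covers the previous entry's hash, so changing any earlier record breaks verification of the log.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first record


def record_hash(prev_hash: str, payload: dict) -> str:
    """Hash the previous hash together with the canonical payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode()).hexdigest()


def append(log: list, payload: dict) -> None:
    """Append a record that is linked to the current tail of the log."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append(
        {"prev": prev, "payload": payload, "hash": record_hash(prev, payload)}
    )


def verify(log: list) -> bool:
    """Recompute every link; an edited record makes this return False."""
    prev = GENESIS
    for entry in log:
        expected = record_hash(prev, entry["payload"])
        if entry["prev"] != prev or entry["hash"] != expected:
            return False
        prev = entry["hash"]
    return True


# Illustrative proof-of-intent records (all values made up).
log = []
append(log, {"agent_id": "agent-123", "intent": "pay invoice", "witnesses": 2})
append(log, {"agent_id": "agent-123", "intent": "renew licence", "witnesses": 3})
```

A real blockchain adds distributed consensus on top of this linking, so that no single party can rewrite the whole chain; the sketch only shows the tamper-evidence property that compliance reviews rely on.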

6. Strategic Observations: potential fault lines

First, standards fragmentation is a near-term risk. The current period of multiple competing protocols (Trusted Agent Protocol, AP2, x402, Agentic Commerce Protocol) will resolve into a smaller number of widely adopted frameworks, and the competition between Visa and Mastercard on agentic infrastructure will drive continued investment and capability development on both sides. Keep your eyes on the standards that will apply over the longer term.

Second, the human oversight problem is not solved, only deferred. Some research shows that 44% of enterprise AI leaders have only moderate confidence that AI agents can act autonomously without human intervention, and most organisations lack the governance and oversight layer that makes autonomous agents trustworthy in production. The cryptographic layer proves what was authorised; make sure your governance also establishes that the original authorisation was wise and intended.

Third, Releaf Financial's positioning is structurally distinct from the payment network players. Where Visa, Mastercard, and Google are solving for payment-time proof of intent in commerce contexts, Releaf's witness-and-notary model is better suited to higher-stakes, lower-frequency agentic actions (contracts, consent, regulatory filings) where legal defensibility matters, and it can also cover lower-stakes, high-volume transactions.

These are complementary markets, not competing ones, and I expect to see partnerships developing to deliver scalable, operational trust layers. It will be interesting to see how these differ across geographies and how they merge at a global level.

Mike McLaren recently analysed the African region and the critical part it will play in building upon the deployment of Mobile Money for millions of people who did not, and still do not, have access to bank accounts. Over a decade, telcos like Safaricom, MTN, Vodacom and Orange Pay have delivered digital payments via the phones of subscribers in rural and urban areas. Mobile Money has been life-changing for sole traders, smallholder farmers, small businesses and merchants. They have leapfrogged banks, and the mobile money innovation has occurred outside the tech vendors of the West. Expect Africa to play a large part in this trust layer development.

The double agent is a figure from espionage, a spy working for both sides. AI agents have the same capability: without clear orders and a strict hierarchy, they can end up hijacking a system, triggering an event – or just meandering around in digital purgatory, waiting to be spurred back into action by some divine prompt or hallucination. New data from the Cloud Security Alliance (CSA), a not-for-profit organization committed to AI, cloud, and Zero Trust cybersecurity education, suggests that 82 percent of organizations have unknown AI agents running in their IT infrastructure. Nearly two in three have experienced AI agent-related incidents in the past 12 months. The agentic economy is here, and it brings with it agentic chaos.

https://www.biometricupdate.com/202604/ai-agents-are-already-inside-your-digital-infrastructure
