Part 5 of the series: Agentic AI in Insurance Claims: A Practitioner's Reality Check

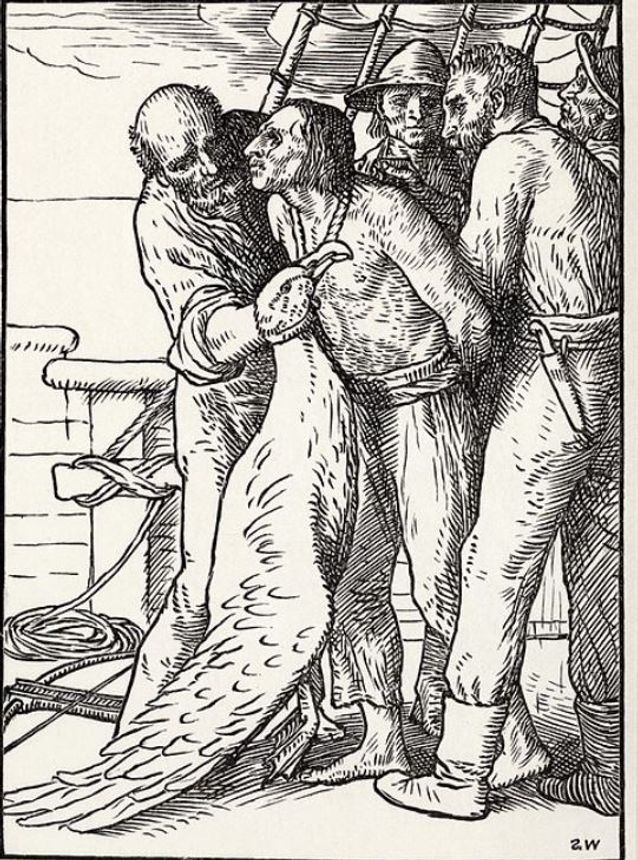

'The Rime of the Ancient Mariner', published by Samuel Taylor Coleridge in 1798, tells of a mariner who killed an albatross that had led his ship out of icy Antarctic waters. This brought bad luck, and the ship was becalmed. The famous lines, which I have adapted, are below:

"Water, water, every where,

And all the boards did shrink;

Water, water, every where,

Nor any drop to drink.

The very deep did rot: Oh Christ!

That ever this should be!

Yea, slimy things did crawl with legs

Upon the slimy sea."

The crew die of thirst and the mariner is fated to live on miserably.

I cannot help but picture deep layers of rotting data in silos across an insurer's systems, with CTOs and CIOs urged by the CEO to apply AI across claims before the data is AI-ready. Avoid that with Part 5 of the series, Agentic AI in Insurance Claims: A Practitioner's Reality Check.

Insurers are training AI on data that was designed for a different world. The industry knows this. The question is whether anyone has formally assessed the risk before scaling it beyond the reach of human correction. On top of that, historical data must be blended with real-time data that reflects changes in risk, such as the rise of EVs and climate change.

This article covers:

Not All Data Carries the Same Risk

The World Moved On. The Data Didn't.

The Human Compensation Layer You're Already Losing

The Institutional Amnesia Problem

Why Standard Remedies Don't Reach the Problem

The Liability Question That Needs a Clearer Answer

The Question That Reframes Everything

Introduction

Somewhere in every insurer's infrastructure sits an archive. Decades of expired policies, settled claims and closed accounts. Data that was perfectly valid when it was captured, served its commercial purpose, and was never looked at again.

Until someone needed training data.

The volume requirements of machine learning have created a demand that nobody anticipated when these records were filed away. To build fraud detection models, claims classification engines, and pricing algorithms, AI teams need hundreds of thousands, sometimes millions, of records. That means reaching back across ten, fifteen, or twenty years of historical data and pulling it into active service as the foundation for systems that will make decisions today.

This is where a fundamental question emerges that most AI programmes haven't formally addressed: was that data ever assessed for fitness for this purpose?

This isn't a hypothetical. Insurers are already here. According to the KPMG 2025 Insurance CEO Outlook, 73% of insurance CEOs rank AI as a top investment priority, with 67% planning to allocate 10–20% of their budgets towards it [11]. The Bank of England's 2024 survey found that the insurance sector reported the highest AI adoption among financial services sectors at 95% [7]. But that headline figure deserves scrutiny. It encompasses everything from a chatbot handling policy enquiries to a machine learning model making coverage decisions on historical claims data. As explored earlier in this series, the industry's definition of what constitutes "AI", and particularly "agentic AI", remains fluid enough that the adoption figure tells you less than it appears to [12]. A document summarisation tool and a fraud detection model trained on fifteen years of claims history both count as "AI adoption," but only one of them is consuming historical data at a scale where fitness matters. The industry isn't deciding whether to deploy AI on historical data. It's already doing it, though often with less clarity than the adoption statistics suggest about which deployments pose a genuine data fitness risk and which don't.

The question, then, is not whether insurers should be cautious. It's whether the programmes already underway have assessed a risk that sits beneath the models, the governance frameworks, and the vendor assurances: the fitness of the data itself.

In every platform integration I've delivered over nearly three decades of enterprise architecture, data access and validation have been among the first and hardest problems to solve. It's never straightforward, regardless of the technology involved. But the consequences used to be contained. Bad data in a traditional integration meant a failed process, a system error, or a support ticket. When the integration feeds an AI that's making coverage decisions or flagging fraud, the consequence is a regulatory breach or a liability exposure that surfaces years later. Same root problem. Fundamentally different risk profile.

1. Not All Data Carries the Same Risk

It's important to be precise about which data we're talking about, because the instinctive response to "insurer data quality" is often either defensive ("our data is fine") or fatalistic ("it's all terrible"). Neither is accurate.

Active policy and claims data are reasonably reliable. Customers have a contractual and legal obligation to inform their insurer of material changes during a policy period. If you change your address, change your vehicle, or alter the use of a commercial property, you're required to report it because failing to do so risks voiding your cover. At renewal, the data gets revalidated. This isn't perfect, but it's actively managed through normal commercial cycles.

The problem sits in two other categories of data that AI systems routinely consume.

The first is historical archive data. Expired policies and settled claims that were accurate when captured, but haven't been touched since closure. A motor claim record from 2013 reflects the regulatory environment, repair cost landscape, fraud patterns, and vehicle technology of 2013. Using it to train a 2025 fraud detection model assumes those patterns remain relevant. Nobody has validated that assumption, because nobody had a reason to revisit expired records until AI created the demand for volume.

The second is data that was transformed during core system migrations, acquisition integrations, or platform changes. Every insurer, regardless of size, has undergone multiple generations of core systems. Each migration involved data transformation decisions: field mappings, taxonomy consolidation, and format conversions. Someone decided how "Property Type A" in the old system mapped to the new classification. Someone decided that truncating a 200-character description field to 80 characters was acceptable. Someone decided how to handle duplicate customer records when two books of business were merged after an acquisition.

Those decisions shaped the data that exists today. And in many cases, the people who made them are no longer available to explain why. Staff involved in a 2012 migration have moved on, retired, or left the company entirely. Documentation from the programme may sit in project repositories that have themselves been decommissioned or migrated.
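Where the documentation has gone, some transformation decisions still leave fingerprints in the data itself. The following is a minimal sketch, not a definitive method: it assumes a free-text field is available as a list of strings and flags a suspected truncation limit when values pile up at exactly the longest observed length. The function name, thresholds, and sample descriptions are all hypothetical, and the heuristic will produce false positives on fields that are legitimately fixed-length.

```python
from collections import Counter


def likely_truncation_limit(values, min_share=0.05, min_count=2):
    """Return the longest observed length if values pile up exactly at it, else None.

    Heuristic only: a cluster of free-text values sitting exactly at the maximum
    length is a common fingerprint of a field cut to fit a narrower column.
    """
    lengths = Counter(len(v) for v in values if v)
    if not lengths:
        return None
    longest = max(lengths)
    at_cap = lengths[longest]
    share_at_cap = at_cap / sum(lengths.values())
    if at_cap >= min_count and share_at_cap >= min_share:
        return longest
    return None


# Hypothetical descriptions: the last three were cut to 80 characters in a migration.
descriptions = [
    "Rear-end collision at roundabout, minor bumper damage.",
    "Water escaped from upstairs bathroom and damaged the kitchen ceiling below.",
    ("Insured reports third party pulled out from a side road without looking. " * 3)[:80],
    ("Storm damage to roof tiles and guttering, temporary repairs carried out. " * 3)[:80],
    ("Vehicle stolen from driveway overnight while keys were inside the house. " * 3)[:80],
]

suspected_limit = likely_truncation_limit(descriptions)
print(suspected_limit)
```

A check like this can show that something was cut and at what length. It cannot show what was lost, which is the part that needed the people who made the decision.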

Large-scale migration and integration projects in insurance are routinely and sensibly delivered with external implementation consultancies and contract specialists. That's the right commercial model. They bring expertise the insurer doesn't need permanently; the programme is delivered without hiring staff for a short-term need; and the consultancy moves on to the next engagement. But the engagement model was never designed to preserve granular decision history for a decade. Nobody imagined those data transformation decisions would matter again. The edge-case mapping choices, the judgment calls about records that didn't fit neatly into the new schema: that knowledge naturally left with the people who held it. Not because anyone failed in their responsibilities, but because the model was designed to deliver working systems, not to serve the needs of AI teams ten years later.

The AI training problem concentrates on these two categories. The volume demands of machine learning force insurers to use data they would otherwise never look at again, and the variance compounds as you reach further back in time.

And the problem is not historical. It's actively being recreated. The KPMG 2025 Insurance CEO Outlook found that 50% of insurance CEOs expect to undertake high-impact M&A in the next three years, a higher proportion than any other sector [11]. Every one of those acquisitions will produce a new round of data transformation decisions: field mappings, taxonomy consolidation, duplicate resolution, and schema compromises. The same choices that shaped the data insurers are now trying to train AI on will be made again, under the same commercial pressures, with the same engagement models. The difference is that this time, the data won't sit dormant in an archive for a decade before anyone cares about its provenance. AI teams will be consuming it almost immediately, and the decisions made during integration will directly shape the models within months, not years. If the industry hasn't solved the provenance problem for data migrated in 2014, it's reasonable to ask whether it will solve it for data migrated in 2026.

2. The World Moved On. The Data Didn't.

The migration problem is at least understood in principle, even if it's rarely addressed. But historical archive data presents a more subtle challenge because it wasn't badly captured or poorly handled. It was perfectly valid commercial data at the time. The problem is temporal: the world changed around it.

Consider a property risk model trained on data from before the introduction of Energy Performance Certificate requirements. The regulatory framework for energy performance didn't exist when those policies were written. The AI is now operating within requirements that its training data entirely predates. Or fraud detection models trained on claims from before the rise of organised crash-for-cash networks [1]. The patterns the model learned may reflect a fraud landscape that no longer exists.

Even something as straightforward as customer contact data illustrates the problem. Records from a decade ago may lack an email address, a mobile number, or an online account identifier because those fields didn't exist in the system when the record was created. The data isn't wrong. It's just incomplete by modern standards, reflecting a world that has since added entire channels of customer interaction.

The temporal problem also works in the other direction. The risk landscape is now generating real-time data at a scale and granularity that didn't exist when most historical training sets were assembled. Telematics devices stream driving behaviour continuously. Connected home sensors report water leaks, temperature anomalies, and security events as they happen. Satellite imagery and weather modelling provide flood, subsidence, and storm exposure data that updates by the hour, not the decade. Electric vehicle adoption is rewriting the motor claims profile: different repair costs, injury patterns, total loss thresholds, and fraud vectors. Climate change is shifting geographic risk in ways that make a decade of historical property claims data for a given postcode actively misleading about what the next decade will look like.

This data exists. Some insurers are already ingesting it. But the harder question is how you blend it with historical records, and what weighting you apply to each. A fraud detection model trained on fifteen years of combustion engine claims needs to account for the fact that EV claims behave differently, but EVs still represent a minority of the current book. Do you weigh recent EV claims data more heavily to reflect the direction of travel, or do you let the historical volume dominate because it's statistically larger? A property risk model covering coastal postcodes needs to reflect accelerating erosion and changing flood frequency, but how much weight do you give three years of worsening climate data against twelve years of relatively stable loss history?

There is no industry consensus on this. Actuaries have always dealt with trends in historical data, but the pace and scale of change in areas like EV adoption, climate exposure, and digitally enabled fraud patterns are outrunning the traditional approach of gradual trend adjustment. The risk is that insurers treat real-time data as a supplementary enrichment layer rather than as a fundamental reweighting of the training set. If your model still draws 80% of its learning from pre-2018 records and treats real-time feeds as a corrective overlay, you haven't solved the temporal fitness problem. You've added a veneer of currency to a foundation that's still anchored in a world that no longer exists.
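To make the weighting question concrete, here is a minimal sketch of one possible approach, exponential recency weighting. It is an illustration under stated assumptions, not a recommendation: the three-year half-life, the function names, and the record counts are all hypothetical, and choosing the decay rate is exactly the judgment on which there is no consensus.

```python
def recency_weights(years, reference_year, half_life_years):
    """Exponential-decay weights: a record half_life_years old counts half as much."""
    return [0.5 ** ((reference_year - y) / half_life_years) for y in years]


def weight_share_before(years, weights, cutoff_year):
    """Fraction of total training weight carried by records older than cutoff_year."""
    old = sum(w for y, w in zip(years, weights) if y < cutoff_year)
    return old / sum(weights)


# Hypothetical book: 1,000 records a year for 2010-2017, 250 a year for 2018-2025.
years = [y for y in range(2010, 2018) for _ in range(1000)]
years += [y for y in range(2018, 2026) for _ in range(250)]

# Every record counted equally: the historical volume dominates.
unweighted = weight_share_before(years, [1.0] * len(years), cutoff_year=2018)

# Assumed half-life of three years: older records still count, but less.
weights = recency_weights(years, reference_year=2025, half_life_years=3)
weighted = weight_share_before(years, weights, cutoff_year=2018)

print(f"pre-2018 share of training weight: {unweighted:.0%} unweighted, {weighted:.0%} weighted")
```

In this made-up book, pre-2018 records carry 80% of the learning when every record counts equally and less than half once the decay is applied. Per-record weights of this kind can usually be passed to a training routine, but the sketch only reports the balance; whether that balance is right for a given line of business remains a domain judgment.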

None of this is the insurer's fault in any traditional sense. You can't reasonably criticise a company for not maintaining data on expired policies. But you can ask whether they assessed the fitness of that historical data when they repurposed it for a fundamentally different function.

The data isn't garbage. It's retired. And someone brought it back into active service for a purpose it was never designed for.

3. The Human Compensation Layer You're Already Losing

For decades, experienced insurance professionals acted as an informal error-correction mechanism for exactly these kinds of data limitations. Not through any formal process, but through accumulated knowledge that was never documented as a data quality function.

A claims handler with fifteen years of experience doesn't just process what the system shows them. They interpret it. They know which data sources have quirks. They recognise when a historical pattern doesn't match current reality. They question outputs that feel wrong, even when the data technically validates. This wasn't called data quality assurance. It was called experience and judgment. But it was effectively a human middleware layer that compensated for data that was adequate for systems but inadequate for decisions.

As explored in the first article in this series, that layer is disappearing [2]. The experienced handlers retiring aren't just taking claims processing capacity with them. They're taking the institutional knowledge that compensated for data limitations: which migration introduced errors, which product line has unreliable historical records, which classification taxonomy changed and when.

This creates a dangerous intersection. AI removes the human compensation layer at precisely the same time the talent crisis removes the humans who provided it. What remains is an autonomous system processing historical data at face value, with nobody in the loop who knows enough to question what it's being fed.

The loss of this layer would matter less if the organisation's collective memory could fill the gap. In most cases, it can't.

4. The Institutional Amnesia Problem

The standard framing of this problem is that the AI and operations teams don't communicate well enough. That's true, but it understates the issue. The deeper problem is that the knowledge may no longer exist anywhere in the organisation.

Data scientists building models assess datasets for statistical properties such as completeness, distribution, outliers, and class balance. Good teams also monitor for data drift, detecting when the statistical distribution of incoming data diverges from the training set, and retrain models periodically. These are standard practices, and they catch a meaningful class of problems. But they operate on statistical signals. They can detect that the distribution has shifted. They can't tell you that 40% of the training records predate a major regulatory change, that the claims classification taxonomy was revised twice during the period the data covers, or that commercial lines data absorbed from an acquisition carries a duplicate entity rate that was never resolved because it didn't affect day-to-day operational processing.

The distinction matters. Statistical monitoring catches quantitative drift. The problems described in this article are semantic: changes in meaning, context, and the regulatory environment that leave the data looking statistically normal yet substantively misleading. A fraud model trained on pre-2015 motor claims data might appear statistically sound while encoding patterns from a world where organised crash-for-cash networks hadn't yet reached their current scale [1].
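The gap between the two kinds of drift can be shown with a small sketch. It assumes a categorical field and uses the Population Stability Index, one common drift statistic, alongside a simple date check against a known change. The codes, dates, and taxonomy revision date are hypothetical, and the helper names are illustrative only.

```python
import math
from collections import Counter
from datetime import date


def psi(expected, actual, eps=1e-6):
    """Population Stability Index between two categorical samples (0 means identical mix)."""
    e_counts, a_counts = Counter(expected), Counter(actual)
    total = 0.0
    for category in set(expected) | set(actual):
        e = max(e_counts[category] / len(expected), eps)
        a = max(a_counts[category] / len(actual), eps)
        total += (a - e) * math.log(a / e)
    return total


def share_predating(record_dates, change_date):
    """Fraction of records captured before a known change in meaning or regulation."""
    return sum(d < change_date for d in record_dates) / len(record_dates)


# Hypothetical data: the mix of classification codes is the same in training and live data.
training_codes = ["A"] * 60 + ["B"] * 40
live_codes = ["A"] * 6 + ["B"] * 4

# But 40 of the 100 training records predate a (hypothetical) taxonomy revision.
training_dates = [date(2013, 6, 1)] * 40 + [date(2020, 6, 1)] * 60
taxonomy_revised = date(2015, 1, 1)

drift = psi(training_codes, live_codes)
predating = share_predating(training_dates, taxonomy_revised)
print(f"PSI: {drift:.3f}, share predating the revision: {predating:.0%}")
```

The statistical check reports no drift because the code frequencies match. The second check only works because someone knew the revision date and wrote it down, which is precisely the knowledge at risk.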

That semantic knowledge used to live in the heads of people who worked with the data for years. But large insurers have been through decades of staff turnover, restructuring, and organisational change. The people who built the migration mapping tables, who understood the edge cases in the taxonomy consolidation, who knew which data sources had reliability issues: many have since retired, moved into roles that no longer touch data, or left the industry entirely. The project documentation from major platform changes may sit in SharePoint instances that have themselves been migrated or decommissioned. The same is true for externally delivered programmes, where specialist knowledge naturally departed with the consultancies and contractors who delivered the work. As discussed earlier, not through any failing, but as a structural characteristic of how large technology programmes are sensibly resourced.

The result is that an AI team in 2025, working with data that was migrated during a programme in 2014, has essentially no way to understand the transformation decisions that shaped their training data. They can see what the data looks like. They can't see why it looks that way, or what was lost, consolidated, or approximated in the process.

This isn't a silo problem you can fix with better cross-team communication. It's an institutional amnesia problem. The organisation is building AI on data whose full provenance it can no longer reconstruct.

5. Why Standard Remedies Don't Reach the Problem

The industry understands that training data quality matters, and there are emerging approaches to address it. Synthetic data generation, for example, is gaining traction in insurance to augment incomplete or biased datasets while maintaining privacy compliance [9]. The premise is sound: if your historical data is sparse or unrepresentative, you can generate additional records that preserve the statistical properties of the original set or use AI itself to generate plausible new data from learned patterns.

But this introduces a validation problem that mirrors the one it's trying to solve. Synthetic data, whether generated statistically or by AI, needs to be verified as correct and contextually valid for the domain in which it will be used. Someone must confirm that the generated motor claims data reflects how fraud presents today, not how it presented in the source records from 2014. Someone must check that the synthetic property risk profiles account for regulatory requirements that didn't exist when the seed data was captured.

There's also a more fundamental problem: synthetic data is too clean. Generated records conform to the statistical patterns in the source data, producing idealised representations of how claims, policies, or customer interactions should look. But real-world insurance data is messy in ways that matter. People are not predictable and don't conform to an idealised view of the data we need. Ten different policyholders will describe the same road traffic accident in ten different ways. Free text fields, which carry some of the most valuable information in a claim, are full of inconsistency, ambiguity, regional idiom, and the kind of natural variation that a generation model smooths away.

Real claims data carries inherent human bias that synthetic data struggles to reproduce authentically. In insurance, claimants have a natural and understandable tendency to present events in a favourable light: "it wasn't my fault," "the damage was already there," "I reported it immediately." Experienced handlers read through this instinctively. They know what a narrative that's subtly shaped looks like versus one that's straightforwardly factual. That signal sits in the texture of the data: the phrasing, the omissions, the sequence of events as described versus as they likely occurred. A model trained on sanitised synthetic records that lack this human messiness will encounter it immediately in production and have no framework for interpreting it.

Even taking a statistically reasonable sample for validation involves reviewing a significant volume of records. And who performs that review? The data scientists who built the generation model are well placed to assess statistical fidelity, but they typically lack the domain expertise to judge whether a synthetic claims record is contextually realistic. Is the messiness right? Are the bias patterns authentic? Does the free text sound like something a real claimant would say? The people who do have that expertise (experienced claims handlers, underwriters, and compliance professionals) are the same population that is leaving the industry, already stretched across operational demands, and in some cases understandably cautious about actively helping to build systems that reduce the need for their own roles.

This is the human compensation layer problem resurfacing in a different form. The industry's proposed remedy for flawed training data depends on the same scarce domain expertise that the AI deployment is simultaneously displacing. Synthetic data addresses volume and privacy constraints. It does not address the semantic fitness question this article raises, and its own validation requirements create a dependency on exactly the human knowledge that is disappearing.

Model monitoring and validation frameworks, including periodic retraining, A/B testing against human decisions, and distribution shift detection, are similarly valuable and necessary. But they are designed to detect when a model's performance degrades relative to measurable outcomes. In insurance, the feedback loops are slow: a fraud model's errors may not surface for months or years, and a claims classification model's biases may only become apparent through regulatory review or customer complaint patterns. By the time the monitoring catches the problem, the exposure has already accumulated.

The gap is not in the tooling. It's in the question that the tooling is designed to answer. Standard ML practices ask: "Is the model performing as expected given the data?" The question that remains unasked is: "Was the data ever fit for this purpose in the first place?"

6. The Liability Question That Needs a Clearer Answer

Under the FCA's Consumer Duty, firms must demonstrate they are delivering good customer outcomes [3]. The SM&CR creates personal accountability for senior managers overseeing the areas in which AI is deployed [4]. In January 2026, the Treasury Select Committee went further, concluding that regulators risk exposing consumers and the financial system to "potentially serious harm" through insufficient oversight of AI in financial services, and recommending the FCA publish specific guidance on SM&CR accountability for AI-related harm by the end of 2026 [8]. Days later, the FCA launched the Mills Review into the long-term impact of AI on retail financial services, with a specific focus on agentic AI and its implications for consumer outcomes, signalling that the regulator is now actively examining exactly these questions [10].

I'm not a lawyer, and I won't make legal claims about what constitutes negligence or liability. But the combination of known data limitations, autonomous decision-making, and personal regulatory accountability creates a risk profile that deserves more formal attention than it typically receives. The Bank of England's own 2024 survey found that 46% of financial services firms reported only a "partial understanding" of the AI technologies they use, while 55% of AI use cases already involve some degree of automated decision-making [7]. Most insurers have an AI governance framework that addresses model behaviour and a data governance framework that addresses storage and access. But there is often a gap between the two that nobody clearly owns: the question of whether the data is fit for the specific purpose it's now being used for.

The DQPro survey of the London insurance market found that legacy data quality and outdated processes remain the most significant barrier to data-driven decision-making, with respondents explicitly connecting this to challenges in AI adoption [5]. A separate Adacta survey of European insurers found that nearly two-thirds are actively modernising core systems, with policy administration and claims systems the top priorities [6]. The industry knows the problem exists.

The honest reality is that most insurers will, and arguably should, proceed with AI deployment despite knowing the historical data hasn't been fully validated. The commercial pressure is real and legitimate. Competitors are deploying. The efficiency gains are measurable. The talent crisis means manual processes are becoming unsustainable regardless. A CEO who pauses their entire AI programme to conduct a comprehensive historical data audit will lose ground to competitors who didn’t and may never recover that position. That's not recklessness. That's commercial reality in a competitive market.

But proceeding blindly is not the same as proceeding. The question isn't whether to deploy. It's whether the organisation understands which of its AI deployments are consuming data with known fitness limitations, and whether it has a plan to manage that exposure as it scales. Every decision the AI makes on data designed for a different world extends the liability, and unlike human-mediated processes, where experienced professionals provide an informal correction layer, automated systems compound errors at a speed and scale that don't self-correct.

7. The Question That Reframes Everything

The question isn't whether insurer data is "clean." That framing misses the point. The data was clean enough for its intended purpose, and the industry handled it sensibly. Active policies were maintained through commercial cycles. Expired records were archived. Migration programmes were delivered professionally. None of those decisions was wrong.

What changed is the purpose. AI repurposes retired data for a function it was never designed to serve, and the gap between "fit for human-mediated processes in 2012" and "fit for autonomous decision-making in 2025" has not been formally closed in most organisations.

Insurers, consultancies, and insurtechs are working on this problem. Data governance frameworks are maturing. Model validation practices are improving. Chief Data Officers are being appointed with broader mandates. Some vendors and consultancies will claim they've solved it, that the right platform, the right data layer, the right governance toolkit closes the gap entirely. Those claims deserve scrutiny. A one-off data-cleansing exercise won't address an inherently ongoing problem: the historical archive is static, while the world it's applied to continues to change. Synthetic data augmentation shifts the validation burden onto domain experts who are already scarce. Standard model monitoring catches statistical drift, but not the semantic drift described in this article. And no platform can reconstruct the institutional knowledge of data transformation decisions that have naturally dispersed over time.

What's needed is not a product or a project but a capability: an ongoing function that assesses data fitness for AI use, sits between the data governance team and the AI team, and has access to domain expertise, not just data science expertise. It would answer questions like: how far back does our training data go, and what changed in the regulatory or commercial environment during that period? Which datasets were shaped by migration decisions we can no longer trace? Where are we training models on data from acquired books of business that were integrated under time pressure, with compromises nobody documented?
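As a rough illustration of what such a function might produce, the sketch below turns those questions into a small summary over a training set. Everything in it is an assumption: the `Record` fields, the register of change events, the batch identifiers, and the idea that a list of documented migration batches exists at all. Maintaining that register is the domain-expertise work; the code is the easy part.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Record:
    captured_year: int
    migration_batch: Optional[str] = None  # None means the record was never migrated
    acquired_book: bool = False


def fitness_summary(records, change_events, documented_batches):
    """Summarise how far back the data goes, what changed meanwhile, and what is untraceable."""
    years = [r.captured_year for r in records]
    earliest, latest = min(years), max(years)
    untraced = [
        r for r in records
        if r.migration_batch is not None and r.migration_batch not in documented_batches
    ]
    return {
        "earliest_year": earliest,
        "latest_year": latest,
        "changes_in_span": [
            name for year, name in sorted(change_events) if earliest < year <= latest
        ],
        "untraceable_migration_share": len(untraced) / len(records),
        "acquired_book_share": sum(r.acquired_book for r in records) / len(records),
    }


# Hypothetical training set and change register.
records = [
    Record(2012, "MIG-2014"),
    Record(2013, "MIG-2014", acquired_book=True),
    Record(2019, "MIG-2021"),
    Record(2024),
]
change_events = [
    (2009, "change before the data starts"),
    (2015, "claims taxonomy revised"),
    (2020, "new regulatory requirement"),
]
summary = fitness_summary(records, change_events, documented_batches={"MIG-2021"})
print(summary)
```

The output is only as good as the register behind it. An empty list of changes means nobody recorded any, not that nothing changed.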

Nobody has built this capability fully. The honest answer is that the industry is still working out what it looks like: who staffs it, where it sits organisationally, what it produces, and how it keeps pace with both the changing world and the expanding appetite for historical data. But the insurers who will navigate this best are not the ones who claim the problem is solved. They're the ones who have identified it as a standing risk, built it into their AI governance, and started asking the right questions of their data before the regulator does.

Assessing data fitness for AI isn't a project. It's an ongoing capability. And building it is now a competitive priority, not a compliance afterthought.

Next in series: The Measurement Problem. If the training data is unreliable, what exactly are you measuring when you assess AI accuracy?

References

[1] House of Commons Library (2024) Tackling crash for cash insurance fraud. CDP-2024-0107. London: House of Commons Library. https://commonslibrary.parliament.uk/research-briefings/cdp-2024-0107/

[2] Brown, C. (2026) 'Agentic AI in Insurance Claims', Agentic AI in Insurance Claims: A Practitioner's Reality Check, Part 1 of 8. https://blog.buildparadox.com/agentic-ai-in-insurance-claims/

[3] Financial Conduct Authority (2024) Insurance multi-firm review of outcomes monitoring under the Consumer Duty. London: FCA. https://www.fca.org.uk/publications/multi-firm-reviews/insurance-multi-firm-review-outcomes-monitoring-under-consumer-duty

[4] Bank of England (2018) Strengthening accountability: Senior Managers and Certification Regime. London: Bank of England. https://www.bankofengland.co.uk/prudential-regulation/key-initiatives/strengthening-accountability

[5] DQPro and The Insurance Network (2024) Navigating data challenges in the London market. London: The Insurance Network. https://www.the-insurance-network.co.uk/tinsights/navigating-data-challenges-in-the-london-market

[6] Adacta (2025) State of Insurance Core System Legacy Modernization: Market Survey 2025. Ljubljana: Adacta. https://resources.adacta-fintech.com/legacy-modernization-survey

[7] Bank of England and Financial Conduct Authority (2024) Artificial Intelligence in UK Financial Services 2024. London: Bank of England. https://www.bankofengland.co.uk/report/2024/artificial-intelligence-in-uk-financial-services-2024

[8] House of Commons Treasury Committee (2026) Artificial Intelligence in Financial Services. Fifteenth Report of Session 2024-26, HC 738. London: House of Commons. https://committees.parliament.uk/publications/51128/documents/283671/default/

[9] OECD (2020) The Impact of Big Data and Artificial Intelligence (AI) in the Insurance Sector. Paris: OECD. See also: Gartner (n.d.) estimate that 85% of algorithms used in insurance would be affected by data bias. https://www.oecd.org/content/dam/oecd/en/publications/reports/2020/01/the-impact-of-big-data-and-artificial-intelligence-ai-in-the-insurance-sector_1fb2946f/c822ee53-en.pdf

[10] Financial Conduct Authority (2026) Review into the long-term impact of AI on retail financial services (The Mills Review). Call for Input. London: FCA. https://www.fca.org.uk/publications/calls-input/review-long-term-impact-ai-retail-financial-services-mills-review

[11] KPMG (2026) 2025 Insurance CEO Outlook. London: KPMG. https://kpmg.com/xx/en/our-insights/ai-and-technology/kpmg-global-ceo-outlook-insurance.html

[12] Brown, C. (2026) 'Agentic AI: When Nobody Can Define It, How Do You Govern It?'. https://blog.buildparadox.com/agentic-ai-when-nobody-can-define-it-how-do-you-govern-it/
